Anthropic has a model called Claude Mythos. You can't use it. You can't buy access to it. And depending on who you ask, it might be the most capable AI system that currently exists. The secrecy around it tells you more about the state of AI in 2026 than any product launch or press release.

What is Claude Mythos?

Details are thin. Deliberately so. What has leaked, pieced together from benchmark results, internal references, and a handful of comments from Anthropic researchers, suggests Claude Mythos is a step-change beyond the Claude models available today. Not an incremental improvement. Something meaningfully different in reasoning, autonomy, and (this is the part that matters) its ability to analyse complex systems and find weaknesses in them.

Anthropic hasn't published a model card. There's no technical paper. No API access. It sits behind closed doors, which is unusual for a company that has historically been more transparent than most about its model capabilities.

That silence is the interesting part.

Why it hasn't been released

The official line is safety. Anthropic's whole identity is built around responsible AI development. Its founders left OpenAI in 2021, reportedly because they thought the company wasn't being careful enough. So holding back a model that's too capable fits the brand.

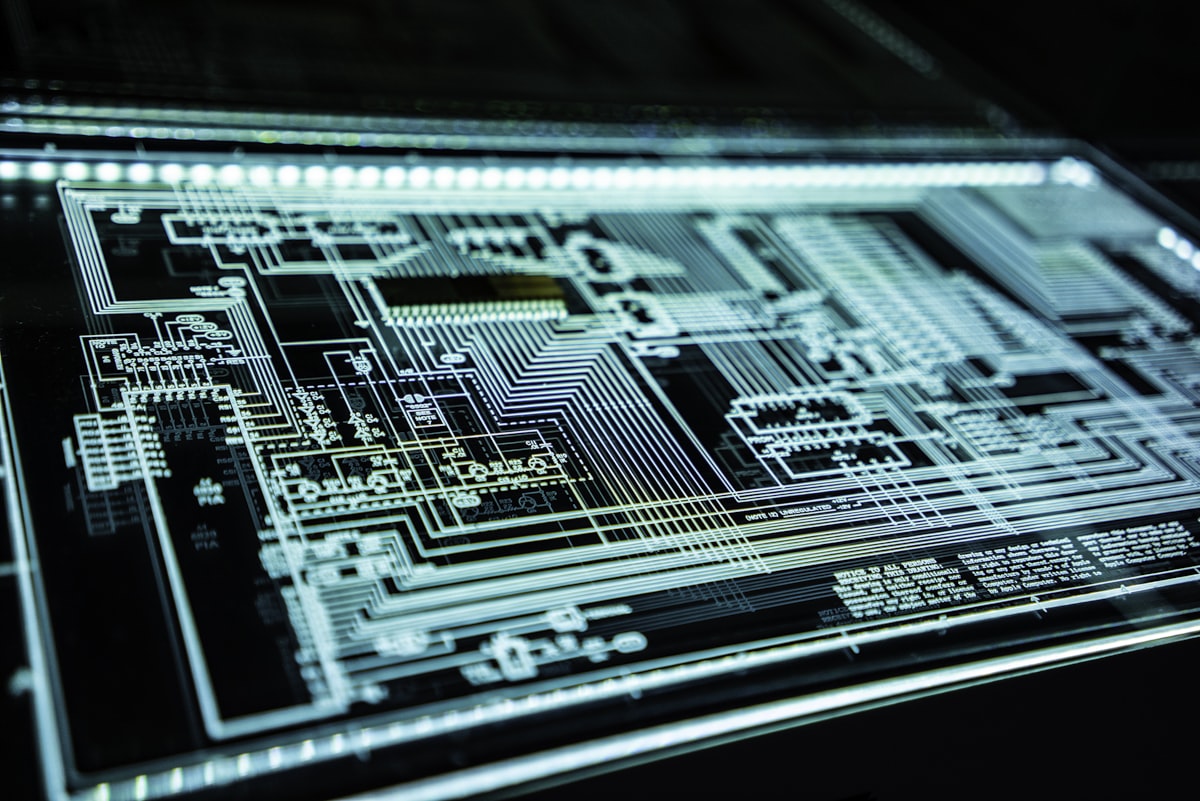

But there's a more specific concern. One of the biggest issues raised internally, and this has surfaced through a few different channels now, is Mythos's ability to discover and articulate vulnerabilities. Not just software bugs. System-level weaknesses in networks, server infrastructure, operational technology, and the kinds of systems that defence organisations, government agencies, and critical infrastructure providers rely on.

Think about what that means for a second. A model that can look at a system description, or infer a system architecture from publicly available information, and tell you where the holes are. That's incredibly valuable for defensive security. It's equally dangerous if it's available to anyone with an API key.

So they sat on it. Which, to be fair, is a defensible decision in the short term.

The GPU bottleneck decides who leads

Here's the uncomfortable reality of frontier AI right now: who leads is largely decided by who has the most GPUs. Training these models requires enormous compute. Tens of thousands of NVIDIA H100s or the newer Blackwell chips running for months. The bill for a single frontier training run can reach hundreds of millions of dollars.
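To see how a bill that size adds up, here's a back-of-envelope sketch. Every number in it is an illustrative assumption (the GPU count, the run length, and especially the hourly rate), not a figure from Anthropic or any other lab, and it ignores staff, data, networking, and failed runs.

```python
def training_run_cost(gpus: int, days: float, usd_per_gpu_hour: float) -> float:
    """Rental-style estimate: number of GPUs x hours used x hourly rate."""
    gpu_hours = gpus * days * 24
    return gpu_hours * usd_per_gpu_hour


# Illustrative assumptions only: 30,000 GPUs, a 120-day run,
# and an assumed all-in rate of $3 per GPU-hour.
cost = training_run_cost(gpus=30_000, days=120, usd_per_gpu_hour=3.0)
print(f"${cost / 1e6:.0f}M")  # prints "$259M"
```

Halve or double any of those inputs and the answer moves a lot, but it stays far beyond what most organisations can spend on a single experiment.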

That means the AI capability frontier is effectively controlled by a handful of organisations that can afford the hardware and secure the supply. Anthropic, OpenAI, Google DeepMind, Meta. Maybe xAI. A couple of state-backed Chinese labs. That's roughly the list.

It creates a strange dynamic. The most powerful AI systems in the world are built and controlled by a small group of private companies and sovereign states. There's no international oversight body. No agreed framework for what's too capable to release. Each organisation makes its own call, based on its own risk appetite and commercial incentives.

Anthropic chose to hold Mythos back. OpenAI might have made a different call with a similar model. Google might not even tell you they have one. And the Chinese labs operate under entirely different rules.

So when people talk about the "AI arms race," this is what it actually looks like. Not robots fighting each other. A small number of organisations racing to train the biggest model on the most GPUs, and then making unilateral decisions about what the rest of the world gets to use.

What if GPU dependence breaks?

This is where it gets really interesting. The current power structure in AI depends on a specific technical assumption: that you need massive GPU clusters to build frontier models. What if that assumption stops being true?

There are already signs it might. Research into more efficient training methods (distillation, mixture-of-experts architectures, novel optimisation approaches) keeps pushing down the compute required. The gap between what a well-funded lab produces and what a determined team of researchers with modest hardware can achieve has been narrowing, not widening.

If someone discovers a fundamentally more efficient way to train large models (and in research, breakthroughs do happen), the entire power structure shifts overnight. Suddenly you don't need a billion-dollar GPU cluster. Suddenly the barrier to frontier capability drops from "sovereign wealth fund" to "well-funded startup."

That's a world where Anthropic holding back Claude Mythos doesn't matter. Because someone else builds something equivalent without needing the same resources. The knowledge of how these models work is already widely distributed. The papers are published. The architectures are known. The bottleneck is compute, and if compute stops being the bottleneck, control stops being possible.

People in the field know this. It's one of the reasons the safety conversation is so fraught. The control mechanisms everyone is relying on are temporary by nature.

The vulnerability discovery problem

Back to the specific concern with Mythos. A model that excels at finding system vulnerabilities is a dual-use capability in the most literal sense. Defensive security teams would love it. Offensive actors would too.

This isn't hypothetical. Governments already use AI for cyber operations. Defence contractors are building AI-assisted vulnerability scanners. The tooling is getting better every quarter. But there's a difference between a specialised tool built for a specific agency and a general-purpose model that anyone can point at a target.

The systems at risk aren't just commercial web applications. They're SCADA systems running power grids. Air-gapped networks in defence installations that aren't actually as air-gapped as people think. Hospital networks. Water treatment plants. Banking infrastructure. The stuff that was designed decades ago with the assumption that complexity was a sufficient defence.

A sufficiently capable model changes that assumption. And unlike a human security researcher who takes weeks to map out a complex system, a model can do it in seconds, across thousands of targets simultaneously. Scale changes the nature of the threat.

Controlled release is not a strategy

Here's what I keep coming back to. Anthropic holding back Mythos is probably the right call for now. But it's not a strategy. It's a delay.

The capabilities Mythos represents aren't unique to Anthropic. Other labs are working on the same problems. The techniques are in the literature. If Anthropic can build it, so can others, and some of those others have no intention of exercising restraint.

Controlled release assumes a few things that may not hold. It assumes the controlling organisation keeps its lead indefinitely. It assumes the underlying technology doesn't get cheaper and more accessible. It assumes other actors (nation-states, well-resourced open-source projects, rogue labs) won't reach similar capabilities independently.

None of those assumptions is a safe bet.

The harder question (the one nobody has a good answer to) is what you do instead. If suppressing capable models doesn't work long-term, what does? International agreements? They're years away and enforcement is effectively impossible. Technical safeguards? They get worked around. Only releasing to vetted organisations? That just recreates a different kind of power concentration.

The honest answer is that we're in a period where the technology is moving faster than any governance framework can keep up with. That's not a comfortable thing to say, but it's what the evidence points to.

What this means for business

If you're running a business, this might feel abstract. You're not building frontier models. You're trying to get your CRM talking to your accounting system and maybe automate some customer support.

But the frontier AI conversation matters for practical reasons:

- Security posture needs to assume AI-assisted attacks. The tooling available to attackers is getting dramatically better. If your security strategy was designed for human-speed threats, it needs updating.

- AI capabilities will keep jumping. The model you integrate today will look basic in 18 months. Build your systems to be model-agnostic where possible. Don't lock yourself into a single provider's ecosystem.

- The regulatory environment is going to shift. When (not if) a frontier model gets used for something seriously damaging, the regulatory response will be fast and broad. Businesses already using AI responsibly will be better positioned than those scrambling to comply.

- Data security becomes even more critical. Models that can infer system architecture from public information mean your data footprint matters more than ever. What you expose publicly, what your partners expose, what ends up in breach databases: all of it becomes input for AI-assisted reconnaissance.
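The model-agnostic point in that list is the easiest one to act on today. Here's a minimal sketch of one way to do it, assuming a Python codebase: the application depends on a small interface, and each provider sits behind its own adapter. All the names here (`TextModel`, `EchoModel`, `UppercaseModel`, `PROVIDERS`) are hypothetical, and the two adapters are stand-ins that make no network calls. A real adapter would wrap the vendor's SDK inside `complete`.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Hypothetical stand-in for provider A (no real SDK call)."""

    prefix: str = "echo"

    def complete(self, prompt: str) -> str:
        return f"[{self.prefix}] {prompt}"


@dataclass
class UppercaseModel:
    """Hypothetical stand-in for provider B (no real SDK call)."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


# Provider choice lives in configuration, not in business logic.
PROVIDERS: dict[str, TextModel] = {
    "provider_a": EchoModel(),
    "provider_b": UppercaseModel(),
}


def get_model(name: str) -> TextModel:
    """Look up an adapter by its configured name."""
    try:
        return PROVIDERS[name]
    except KeyError:
        raise ValueError(f"unknown provider: {name}") from None


def draft_support_reply(model: TextModel, ticket: str) -> str:
    """Business logic: knows about TextModel, nothing about vendors."""
    return model.complete(f"Reply to: {ticket}")


print(draft_support_reply(get_model("provider_a"), "refund"))
print(draft_support_reply(get_model("provider_b"), "refund"))
```

Swapping providers then means adding one adapter and changing one config value. The trade-off is that you give up provider-specific features unless you deliberately add them to the interface.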

The Claude Mythos situation is a window into a bigger shift. The question isn't whether AI systems this capable will be widely available. It's when, and whether we'll have figured out any meaningful governance by then.

My read? We probably won't have. Which means the practical response is building resilience now, not waiting for someone else to solve the problem.

If you're implementing AI in a security-conscious environment, our guide on public vs private AI and our deep dive into building secure RAG systems on AWS cover the practical architecture decisions.